The Regression Algorithm Cheat Sheet: Master 10 Essential Methods

Bookmark this visual guide; you’ll reference it constantly.

Regression algorithms help us understand how a target variable changes with respect to one or more input variables. After we estimate the model’s parameters, we can see how shifts in one variable influence another. Because regression is used so often in data science, it’s important to know the different types so you can clearly state which method you’re applying and choose the one that fits your problem.
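To make "estimating the model's parameters" concrete, here is a minimal sketch of ordinary least squares with a single input, written in plain Python. The function name `fit_simple_linear` and the hours/scores data are hypothetical, chosen only for illustration.

```python
def fit_simple_linear(xs, ys):
    """Ordinary least squares for the line y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance-like and variance-like sums around the means.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Hypothetical data: hours studied vs. exam score.
hours = [1, 2, 3, 4, 5]
scores = [52, 55, 61, 64, 68]

intercept, slope = fit_simple_linear(hours, scores)
print(f"score = {intercept:.2f} + {slope:.2f} * hours")
```

The fitted slope is the "shift" the paragraph describes: on this toy data, each extra hour is associated with roughly 4.1 more points.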

The 10 Core Regression Algorithms at a Glance

Here are ten of the most widely used regression algorithms, each described with its core mechanics:

Linear Regression

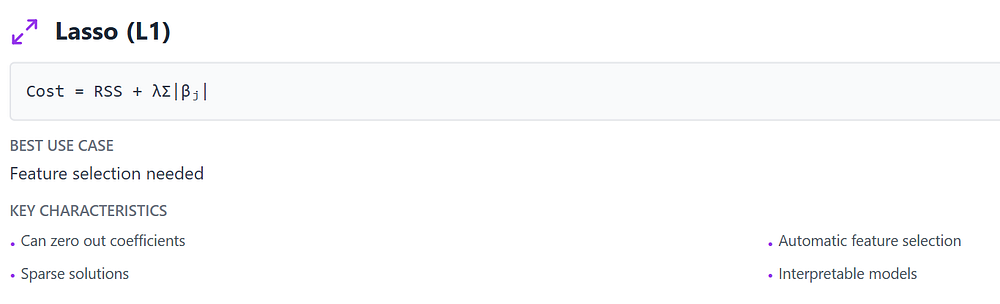

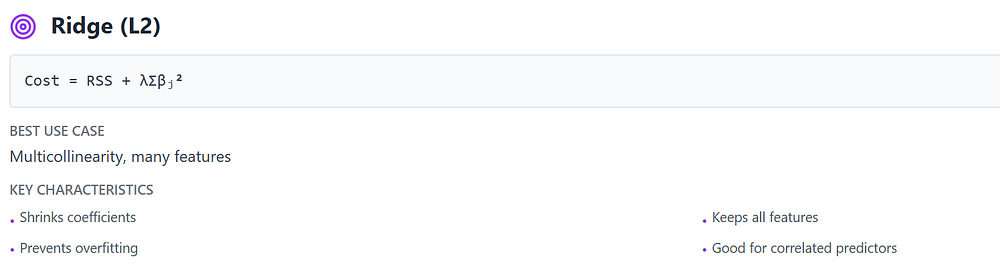

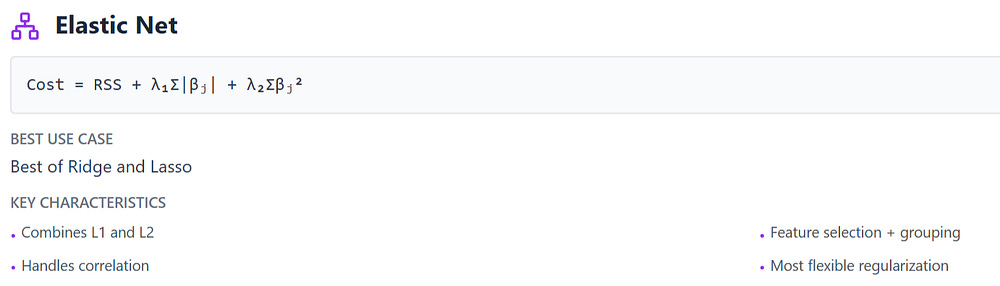

Regularized Regression

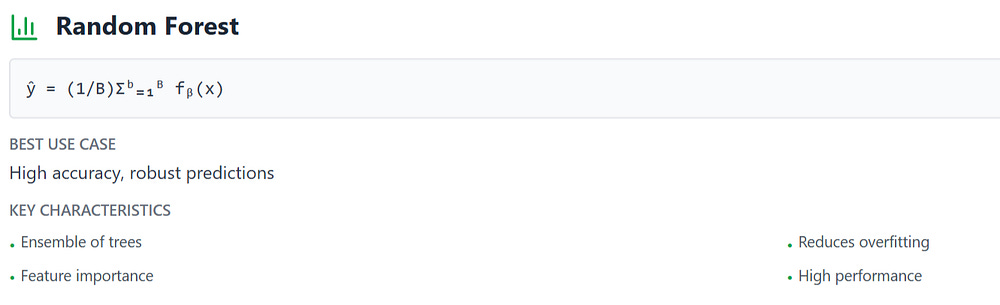

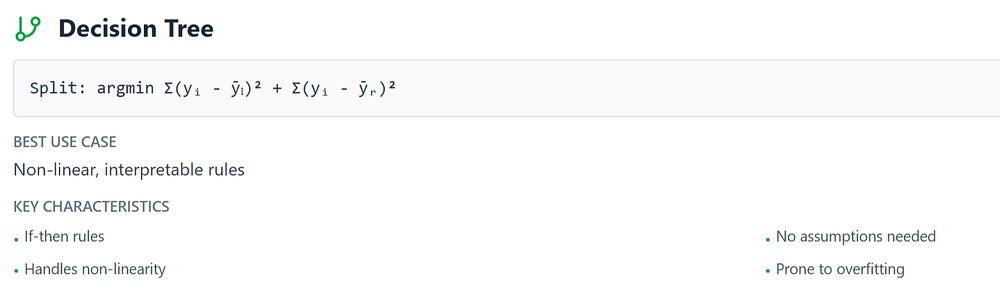

Tree-Based Regression

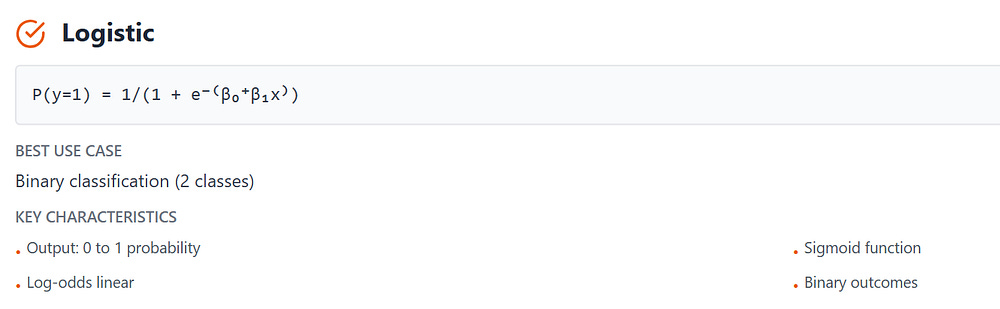

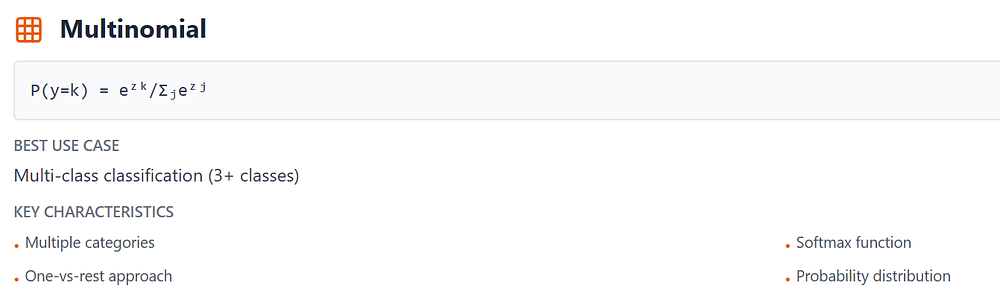

Categorical Probability


Quick Reference Guide

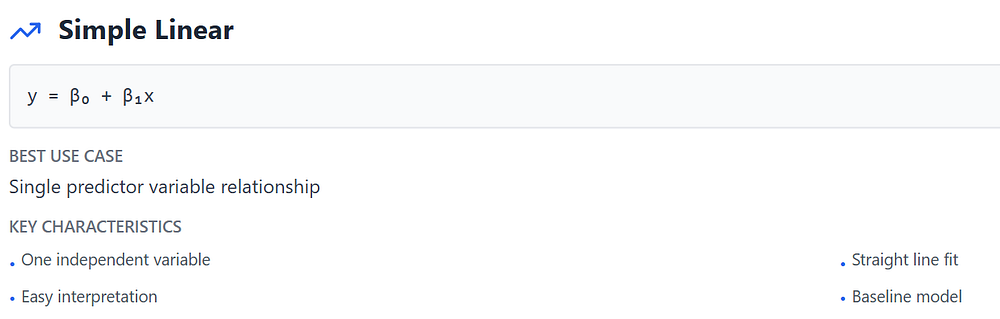

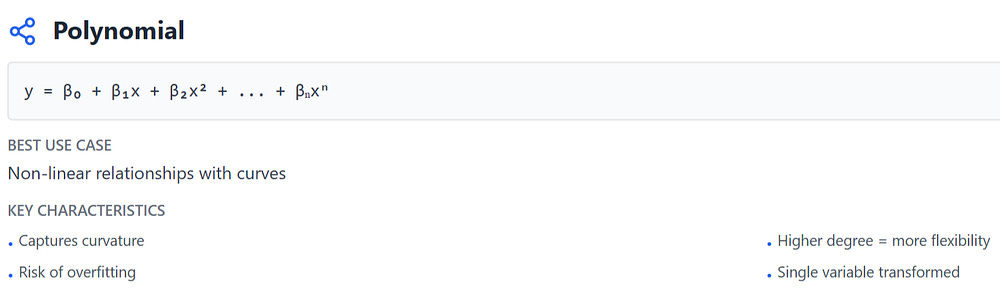

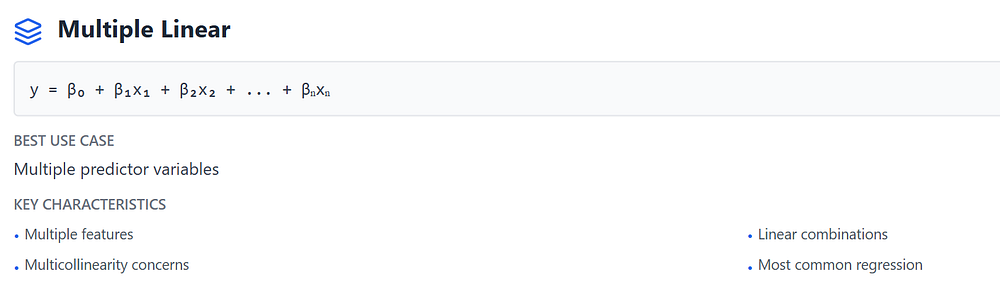

Linear Methods

Foundational models that assume linear relationships

Regularized Methods

Prevent overfitting through coefficient penalties
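As a rough illustration of a coefficient penalty, the sketch below assumes a ridge-style (squared) penalty on the slope of a one-feature model, with the intercept left unpenalized. The function name `fit_ridge_1d` and the data are hypothetical.

```python
def fit_ridge_1d(xs, ys, penalty):
    """One-feature ridge fit: minimizes squared error + penalty * slope**2."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    # The penalty enlarges the denominator, pulling the slope toward zero.
    slope = sxy / (sxx + penalty)
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Hypothetical data, for illustration only.
xs = [1, 2, 3, 4, 5]
ys = [52, 55, 61, 64, 68]

penalties = [0.0, 10.0, 100.0]
slopes = [fit_ridge_1d(xs, ys, p)[1] for p in penalties]
for p, s in zip(penalties, slopes):
    print(f"penalty={p:>5}: slope={s:.3f}")
```

With a penalty of zero this reduces to ordinary least squares; larger penalties shrink the slope, which is how the model trades a little bias for lower variance.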

Tree Methods

Non-parametric models that handle complex patterns
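A single-split regression tree (a "stump") is one way to see why tree methods need no assumed functional form. The sketch below picks the threshold that minimizes squared error and predicts the mean on each side. The names `fit_stump` and `predict_stump` and the data are hypothetical, and the code assumes at least two distinct input values.

```python
def sse(values):
    """Sum of squared errors around the mean of `values`."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)


def fit_stump(xs, ys):
    """Find the one split on x that minimizes total squared error."""
    pairs = sorted(zip(xs, ys))
    best = None
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # cannot split between identical x values
        threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
        left = [y for _, y in pairs[:i]]
        right = [y for _, y in pairs[i:]]
        error = sse(left) + sse(right)
        if best is None or error < best[0]:
            best = (error, threshold,
                    sum(left) / len(left), sum(right) / len(right))
    _, threshold, left_mean, right_mean = best
    return threshold, left_mean, right_mean


def predict_stump(x, threshold, left_mean, right_mean):
    return left_mean if x <= threshold else right_mean


# Hypothetical step-shaped data that a straight line would fit poorly.
xs = [1, 2, 3, 4, 5, 6]
ys = [10, 11, 9, 30, 31, 29]

threshold, left_mean, right_mean = fit_stump(xs, ys)
print(threshold, left_mean, right_mean)
```

Full tree-based regressors apply this same split search recursively to each side, and ensembles combine many such trees.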

Classification Methods

Predict categories through probabilities
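The text does not name the method here, but a logistic-style model is the usual example of predicting a category through a probability. The sketch below assumes that setup: a linear score is passed through the sigmoid function, and the resulting probability is compared with a cutoff. The coefficients and function names are hypothetical, not fitted values.

```python
import math


def predict_probability(x, intercept, slope):
    """Sigmoid of a linear score: a probability between 0 and 1."""
    z = intercept + slope * x
    return 1.0 / (1.0 + math.exp(-z))


def predict_category(x, intercept, slope, cutoff=0.5):
    """Turn the probability into a 0/1 label using a cutoff."""
    return 1 if predict_probability(x, intercept, slope) >= cutoff else 0


# Hypothetical coefficients, chosen only for illustration.
intercept, slope = -4.0, 1.0

for hours in (2, 4, 6):
    p = predict_probability(hours, intercept, slope)
    label = predict_category(hours, intercept, slope)
    print(f"hours={hours}: probability={p:.3f}, category={label}")
```

The cutoff of 0.5 is a common default rather than a rule; raising or lowering it changes which probabilities are mapped to each category.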